- #4K GRAPHICS CARD FOR MAC PRO 5.1 MAC OS#

- #4K GRAPHICS CARD FOR MAC PRO 5.1 PC#

- #4K GRAPHICS CARD FOR MAC PRO 5.1 WINDOWS#

Although you can enable DLSS with games in GeForce Now, this is the first time Nvidia has explicitly confirmed that the technology helps power its cloud gaming platform.


As of now, the increased resolution is only available for GeForce Now’s RTX 3080 tier. DLSS has been a staple of high-end PC gaming for the past couple of years, leveraging the dedicated Tensor cores inside RTX graphics cards to upscale games with AI.


As part of the weekly GFN Thursday, Nvidia announced that 4K streaming is coming to the GeForce Now apps on Windows and Mac, which is enabled by Nvidia’s Deep Learning Super Sampling (DLSS) technology.

Hoping that someone can point me in the right direction. I'm also going to include my OpenCore plist in case that is of any help. Happy to attach output from any additional commands if that would assist.

I'm attaching the complete output from the AGDCDiagnose command.

I've tried changing the device id of the card in WhateverGreen to see if the OS was somehow limiting the card's output due to the model, but trying various other device ids with Polaris chips (and even some others) has yielded nothing different. I've also attempted setting or changing several other parameters in WhateverGreen, such as "enable-dpcd-max-link-rate-fix", setting "dpcd-max-link-rate" to "HgAAAA==" (hex data 1E000000), and the "enable-hdmi20" flag, among others, but all to no avail. I've spent time playing with EDIDUtil along with the more simplified patch-edid script to see if limiting the EDID to RGB mode only would solve the issue, but it simply allows for 4k60 RGB 4:4:4 at 8 bits.
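For anyone checking the "dpcd-max-link-rate" value, here is a rough sketch of how the base64 data can be decoded and turned into a link rate. It assumes the four bytes are read as a little-endian 32-bit value and that the low byte is a standard DPCD MAX_LINK_RATE code (a multiple of 0.27 Gbps per lane). The function names are made up for illustration and are not part of WhateverGreen or OpenCore.

```python
import base64

# DPCD MAX_LINK_RATE codes count in units of 0.27 Gbps per lane.
GBPS_PER_UNIT = 0.27
RATE_NAMES = {0x06: "RBR", 0x0A: "HBR", 0x14: "HBR2", 0x1E: "HBR3"}


def decode_plist_data(b64_text):
    """Decode a plist <data> value, tolerating missing '=' padding."""
    padded = b64_text + "=" * (-len(b64_text) % 4)
    return base64.b64decode(padded)


def link_rate(b64_text, lanes=4):
    """Return (name, Gbps per lane, raw Gbps over all lanes).

    Assumption: the bytes form a little-endian integer holding the DPCD code.
    """
    code = int.from_bytes(decode_plist_data(b64_text), "little")
    per_lane = code * GBPS_PER_UNIT
    return RATE_NAMES.get(code, "unknown"), per_lane, per_lane * lanes


if __name__ == "__main__":
    name, per_lane, total = link_rate("HgAAAA==")
    print(f"{name}: {per_lane:.2f} Gbps per lane, {total:.2f} Gbps raw on 4 lanes")
```

Under those assumptions the value corresponds to HBR3, the highest link rate DisplayPort 1.4 defines. The raw figure still includes 8b/10b encoding overhead, so the usable payload is lower.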

So it seems that adequate bandwidth is available to support 4k60 4:4:4 at 10-bits unless I'm missing something somewhere.

I'm using an AMD RX480 8GB card (and have an identical secondary card that I've tested with). The card supports DisplayPort 1.4 and HDMI 2.0b.

I believe that I've eliminated cable issues from the equation. I have tested running HDMI from the card to the display with an HDMI 2.1 cable, using a DP 1.4 to HDMI 2.1 cable (which I believe is an active adapter, although I have been unable to confirm) from the card's DisplayPorts to the display's HDMI ports, using an active DP 1.4 to HDMI 2.1 adapter in combination with an HDMI 2.1 cable, and using an active DP to HDMI 2.0 adapter.

I have been able to get 4k60 YCbCr 4:4:4 with 8-bit color, 4k30 YCbCr 4:4:4 with 10-bit color, 4k60 YCbCr 4:2:0 with 10-bit color, and 4k60 RGB 4:4:4 with 8-bit color, but in no event have I achieved 4k60 YCbCr 4:4:4 with 10-bit color or 4k60 RGB 4:4:4 with 10-bit color. HDR also functions in at least the 10-bit modes.

According to my research, 4K60 4:4:4 10b-HDR = 22.28Gbps, which is outside of the range for HDMI 2.0b at ~18Gbps, so I can eliminate outputting a signal from the card's HDMI port (unless using DSC, which I believe lowers the 22.28Gbps back to ~18Gbps, possibly lower?), leaving me with just the DisplayPort 1.4 outputs. The card, display, and cables are all capable of outputting, transmitting, and displaying 4k60 4:4:4 at 10 bits, and likely at 4k120 from what I can discern. The DP1.4 to HDMI 2.1 cable claims to support 4k120 HDR, which should consume around 24Gbps at 4:2:0, and the other DP1.4 to HDMI 2.1 active adapter with HDMI 2.1 cable claims to support 4k120 and 8k60 HDR, which should consume ~40Gbps. The active adapter's product page clearly states that it is capable of 4k60 4:4:4 at 10 bits.
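The bandwidth figures in this post can be sanity-checked with a short calculation. This is only a sketch: it assumes the standard CTA-861 timing for 3840x2160 (4400 x 2250 total pixels per frame, so a 594 MHz pixel clock at 60 Hz), 8b/10b-style TMDS overhead on HDMI 2.0, and the DisplayPort 1.4 HBR3 payload of 25.92 Gbps over four lanes. The function names are illustrative only.

```python
# Assumed CTA-861 timing for 3840x2160, including blanking.
H_TOTAL = 4400
V_TOTAL = 2250

HDMI20_TMDS_GBPS = 18.0         # HDMI 2.0 raw TMDS rate (3 channels x 6 Gbps)
DP14_HBR3_PAYLOAD_GBPS = 25.92  # 4 lanes x 8.1 Gbps after 8b/10b overhead


def pixel_clock_mhz(refresh_hz, chroma="4:4:4"):
    """Pixel clock in MHz; 4:2:0 is carried at half the 4:4:4 clock."""
    clock = H_TOTAL * V_TOTAL * refresh_hz / 1e6
    return clock / 2 if chroma == "4:2:0" else clock


def payload_gbps(refresh_hz, bits_per_component, chroma="4:4:4"):
    """Video data rate in Gbps before link encoding overhead."""
    return pixel_clock_mhz(refresh_hz, chroma) * 3 * bits_per_component / 1000


def hdmi_tmds_gbps(refresh_hz, bits_per_component, chroma="4:4:4"):
    """Raw TMDS rate on HDMI: every 8 data bits are sent as 10 bits."""
    return payload_gbps(refresh_hz, bits_per_component, chroma) * 10 / 8


if __name__ == "__main__":
    modes = [(60, 8, "4:4:4"), (30, 10, "4:4:4"), (60, 10, "4:2:0"), (60, 10, "4:4:4")]
    for hz, bpc, chroma in modes:
        tmds = hdmi_tmds_gbps(hz, bpc, chroma)
        fits_hdmi = tmds <= HDMI20_TMDS_GBPS
        fits_dp = payload_gbps(hz, bpc, chroma) <= DP14_HBR3_PAYLOAD_GBPS
        print(f"4k{hz} {chroma} {bpc}-bit: TMDS {tmds:.2f} Gbps, "
              f"HDMI 2.0 ok={fits_hdmi}, DP 1.4 HBR3 ok={fits_dp}")
```

Under these assumptions, the modes that work all fit within the ~18Gbps HDMI 2.0 limit, and 4k60 4:4:4 at 10 bits is the only one that exceeds it while still fitting within DisplayPort 1.4 at HBR3. That pattern would be consistent with the output being held to HDMI 2.0-class bandwidth somewhere in the chain, though it does not prove where.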


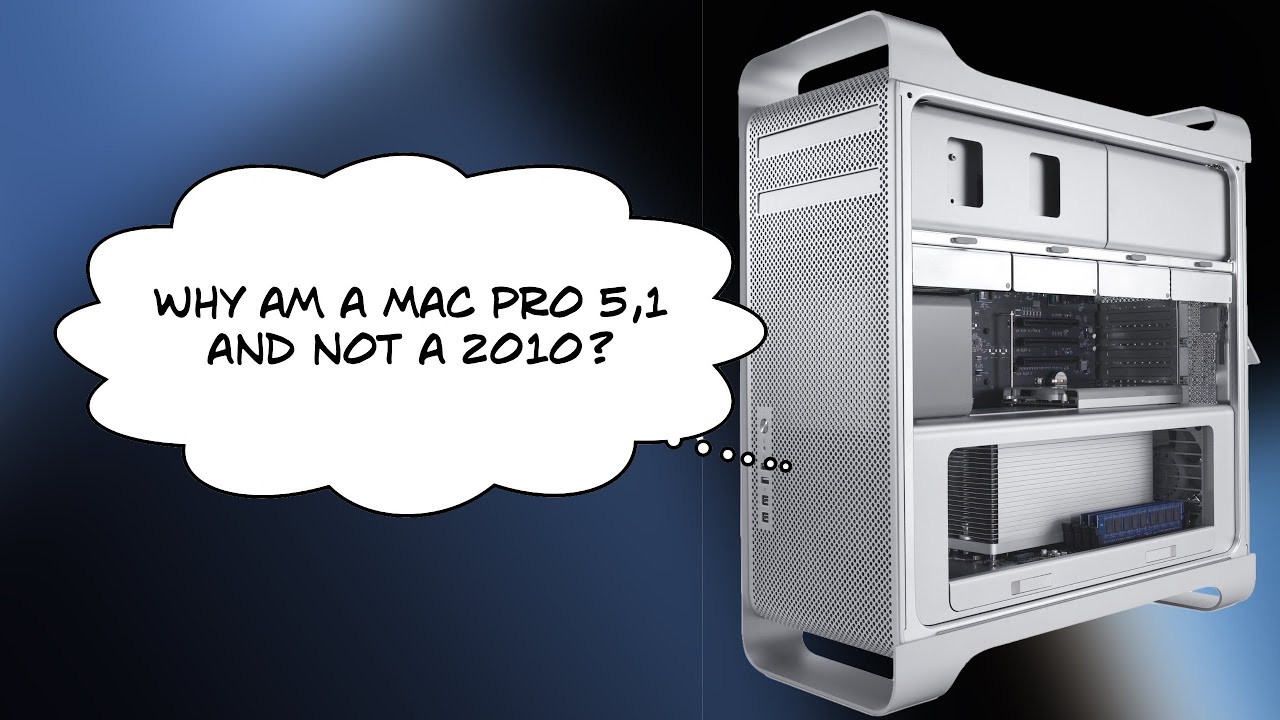

I'm having an issue achieving 4K resolution at 60Hz with chroma 4:4:4 and 10-bit color. I've spent countless hours reading through various posts on many different forums and testing possible solutions, but have drawn a blank. I'm running macOS 12.3.1 (Monterey) on a 2012 Mac Pro 5,1 using OpenCore and WhateverGreen.